The change acceptance board (CAB) was one branch of the reliability journey described in "DevOps Takes Practice: How New Relic Evolves Reliability", a talk by Matthew Flaming & Beth Adele Long at New Relic's 2017 FutureStack conference in London.

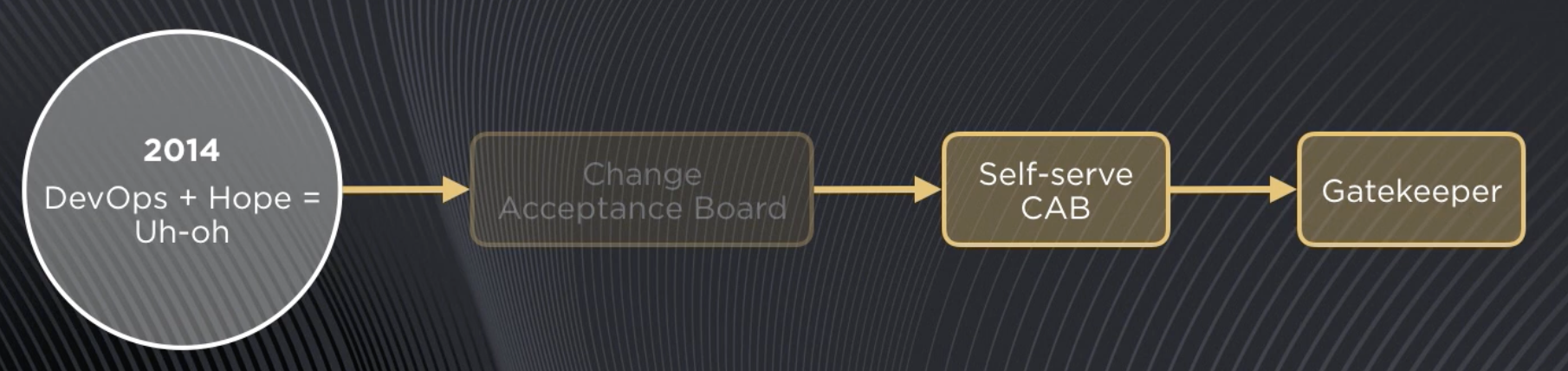

Evolution of the Change Acceptance Board at New Relic.

It's useful to look at the example of our change acceptance board, the CAB, which we introduced at this point in time.

YOUTUBE FX03FnvIE0g

START 488


# First incarnation

In its first incarnation, this [meant] going into a dimly lit room with a couple of architects and trying to convince them that your changes were safe to release.

This did in fact help us catch some risky releases, some potentially conflicting deploys, and maybe, most importantly, it helped teams slow down and think more conscientiously about the risk their changes might introduce.

All of that's good.

But as you might imagine, [it was] not exactly popular with engineers. We also found pretty quickly that our releases were getting bigger and bigger as changes piled up behind the CAB.

In fact, ironically, by letting changes pile up into bigger releases, we had increased risk through the law of unintended consequences.

# Second incarnation

What if we try an experiment? What if we make the CAB partially self-serve? We built a tool around a calendaring system that let engineers see other scheduled releases and request their own release slot along with a risk evaluation: low-risk releases were auto-approved, and high-risk releases were sent to the architects for review.
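The self-serve flow described above can be sketched as a small routing step over a shared release calendar. This is a minimal illustration under stated assumptions, not New Relic's actual tool: the class names, the two-level `Risk` scale, and the in-memory calendar are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime
from enum import Enum


class Risk(Enum):
    # Hypothetical two-level scale; a real tool might use a richer evaluation.
    LOW = "low"
    HIGH = "high"


@dataclass
class ReleaseRequest:
    team: str
    service: str
    slot: datetime
    risk: Risk


@dataclass
class ReleaseCalendar:
    """Hypothetical self-serve CAB: a shared calendar of release slots."""
    approved: list[ReleaseRequest] = field(default_factory=list)
    pending_review: list[ReleaseRequest] = field(default_factory=list)

    def releases_at(self, slot: datetime) -> list[ReleaseRequest]:
        # Let engineers see what else is scheduled (or awaiting review) in the same slot.
        return [r for r in self.approved + self.pending_review if r.slot == slot]

    def submit(self, request: ReleaseRequest) -> str:
        if request.risk is Risk.LOW:
            # Low risk: approve automatically, no meeting with the architects.
            self.approved.append(request)
            return "auto-approved"
        # High risk: queue the request for an architect review.
        self.pending_review.append(request)
        return "sent to architects for review"
```

The design keeps the human review only for the requests that need it, while every engineer still gets the shared view of the schedule that the original CAB meeting provided.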

This is progress.

# Third incarnation

But we realized we still weren't solving the core problem. What we were worried about wasn't really code quality. What we were worried about was people not being aware of the context in which their release was occurring, or not taking appropriate mitigation steps.

So we went one step further and developed a tool that we call gatekeeper. Gatekeeper is essentially a pre-flight checker for releases. It makes sure that a release's dependencies are healthy and its preconditions are met, generates warnings if something is wrong, and also creates visibility into other releases.
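A gatekeeper-style pre-flight check might look roughly like the sketch below. It assumes dependency health, preconditions, and in-flight releases are already available as plain dictionaries; in a real system they would come from monitoring and deploy tooling. `preflight`, `PreflightReport`, and every parameter name are hypothetical, not gatekeeper's real interface.

```python
from dataclasses import dataclass, field


@dataclass
class PreflightReport:
    warnings: list[str] = field(default_factory=list)
    concurrent_releases: list[str] = field(default_factory=list)

    @property
    def clear(self) -> bool:
        # No warnings means nothing stood out during the pre-flight check.
        return not self.warnings


def preflight(
    service: str,
    dependencies: list[str],
    health: dict[str, bool],
    preconditions: dict[str, bool],
    in_flight: dict[str, str],
) -> PreflightReport:
    """Hypothetical gatekeeper-style check; the dicts stand in for real health/deploy APIs."""
    report = PreflightReport()
    # 1. Are the services this release depends on healthy right now?
    for dep in dependencies:
        if not health.get(dep, False):
            report.warnings.append(f"dependency {dep} is unhealthy or unknown")
    # 2. Are the release's own preconditions met (e.g. a migration already applied)?
    for name, ok in preconditions.items():
        if not ok:
            report.warnings.append(f"precondition not met: {name}")
    # 3. Visibility: list every in-flight release (release id -> target service)
    #    and warn when one touches this service or one of its dependencies.
    for release, target in in_flight.items():
        report.concurrent_releases.append(f"{release} -> {target}")
        if target == service:
            report.warnings.append(f"{release} is already deploying {service}")
        elif target in dependencies:
            report.warnings.append(f"{release} is currently deploying dependency {target}")
    return report
```

Note that the function only reports; it does not approve or reject anything.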

So now teams can just include gatekeeper in their deploy pipeline and they can make autonomous decisions. They can be smart on their own about whether it's safe to release or whether they want to hold off. They have visibility into the whole system and how their change is going to impact it.
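Because gatekeeper informs rather than approves, the go/no-go decision can live in each team's own pipeline stage. The sketch below assumes the pre-flight step hands back a list of warning strings; `TeamPolicy` and `deploy_decision` are hypothetical names, and the keyword matching is a deliberately simple stand-in for whatever rules a team actually uses.

```python
from dataclasses import dataclass


@dataclass
class TeamPolicy:
    """Hypothetical per-team settings: each team owns its own risk tolerance."""
    hold_on_any_warning: bool = False
    # Warning substrings this team treats as reasons to hold the release.
    blocking_keywords: tuple[str, ...] = ("unhealthy",)


def deploy_decision(warnings: list[str], policy: TeamPolicy) -> str:
    """Pipeline stage: the pre-flight warnings inform, the team's policy decides."""
    if not warnings:
        return "proceed"
    if policy.hold_on_any_warning:
        return "hold"
    if any(keyword in w for w in warnings for keyword in policy.blocking_keywords):
        return "hold"
    # Non-blocking warnings are surfaced to the team but do not stop the release.
    return "proceed with warnings"
```

Two teams can run the same checks and reach different decisions, which is the autonomy the paragraph above describes.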

# Recommendation

It's so important to keep focusing on the core problem you're trying to solve, and to strip out everything around it that could be generating friction or frustration, or disempowering people who want to move quickly.


New Relic's specific story is nicely told here. We are aware of seven specific software companies that have adopted a change approval board after embarrassing moments of poor reliability.

DORA research finds that in most cases CABs negatively impact the change fail rate.

> What our research has shown through collecting data over the years, is that while they do exist, on the whole, an external change approval body will slow you down.
>
> That's no surprise. So your change lead time is going to increase, your deployment frequency will decrease.
>
> But, at best, they have zero impact on your change fail rate. In most cases, they have a negative impact on your change fail rate. So you're failing more often.
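The three measures named in the quote can be computed from a log of deployments. The sketch below uses common working definitions (change fail rate as the fraction of deployments that caused a production failure, deployment frequency as deployments per day, lead time as commit-to-production time); the `Deployment` record and function names are hypothetical, and this illustrates the metrics only, not DORA's research methodology.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median


@dataclass
class Deployment:
    # Hypothetical record shape for one production deployment.
    committed_at: datetime   # when the change was committed
    deployed_at: datetime    # when it reached production
    caused_failure: bool     # did it degrade service or need remediation?


def change_fail_rate(deploys: list[Deployment]) -> float:
    # Fraction of deployments that led to a failure in production.
    if not deploys:
        raise ValueError("no deployments to measure")
    return sum(d.caused_failure for d in deploys) / len(deploys)


def deployment_frequency(deploys: list[Deployment], days: int) -> float:
    # Average number of deployments per day over the observation window.
    return len(deploys) / days


def median_lead_time(deploys: list[Deployment]) -> timedelta:
    # Median time from commit to running in production.
    return median(d.deployed_at - d.committed_at for d in deploys)
```

A review gate that holds changes for a weekly meeting shows up directly in `median_lead_time` and `deployment_frequency`; whether it helps `change_fail_rate` is exactly what the quoted research questions.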