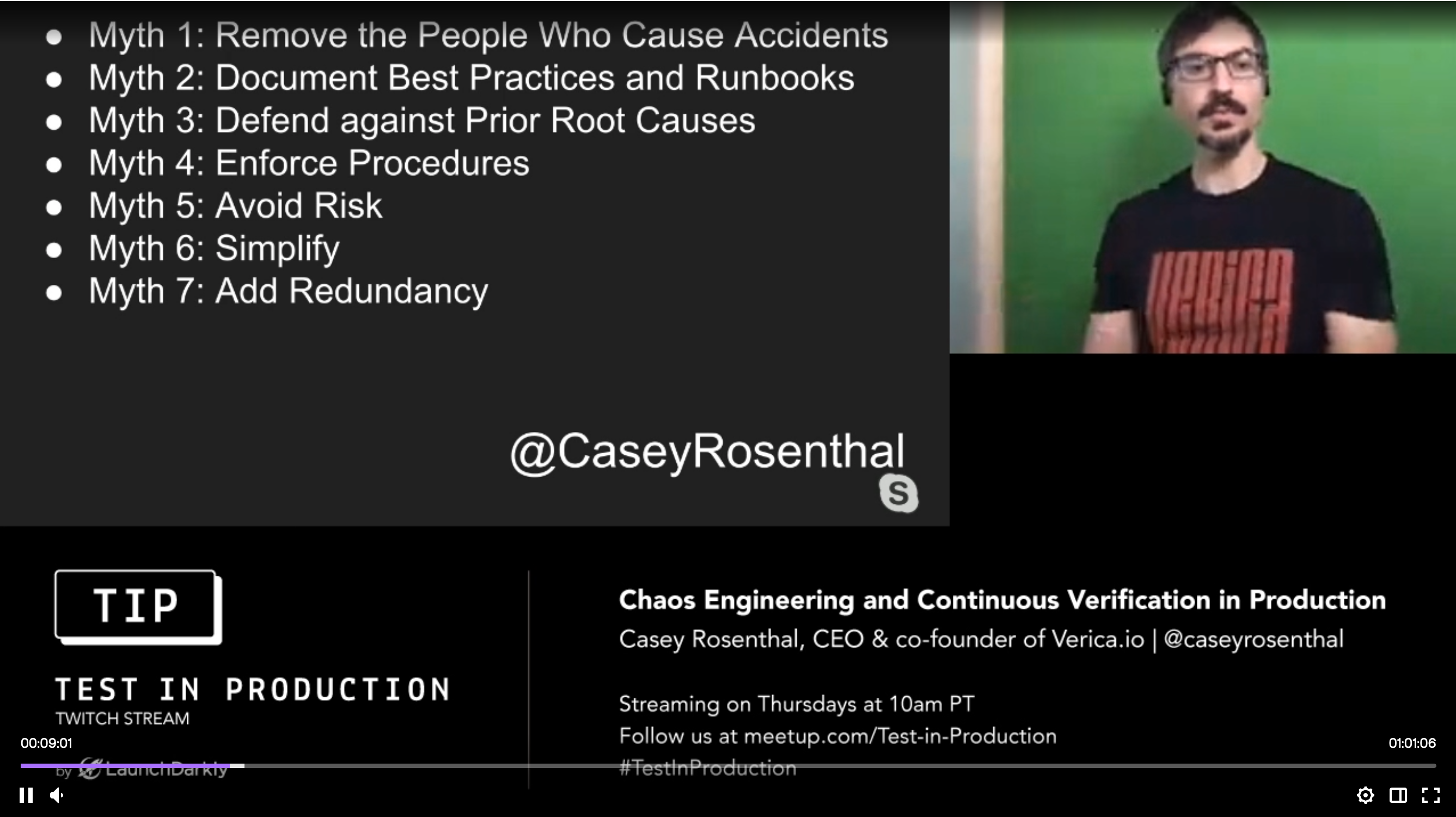

Casey Rosenthal and Nora Jones have written a new book on Chaos Engineering with the help of many guest authors. Casey gave a talk on Test In Production which characterizes ways chaos engineering can help software organizations reframe their approach to building reliable systems. In the first ten minutes of the talk he sets out seven myths of reliability common in current practice, and follows that with two different mental models that help with reframing.

Book cover and slide from talk

In the talk, Casey presents seven common practices in the software industry that our intuitions lead us to believe will make our systems more reliable. While presenting each one he makes a reasonable case for the ways in which each notion fails to deliver the reliability it seeks to create.

Myth 1: Remove the People Who Cause Accidents

Myth 2: Document Best Practices and Runbooks

Myth 3: Defend against Prior Root Causes

Myth 4: Enforce Procedures

Myth 5: Avoid Risk

Myth 6: Simplicity

Myth 7: Add Redundancy

Casey is careful in his use of the words reliability, robustness, and resilience. Robustness is the ability of a complex system to sustain and adapt to known loads and pressures. Resilience, on the other hand, is all about how humans improvise in an incident when reality has surprised us by exceeding the limits of our automation in some new way. Reliability of the system requires both robustness and resilience.

Classic examples of automation creating robustness are automated testing, circuit breakers, and auto-scaling.
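To make the circuit breaker idea concrete, here is a minimal sketch in Python. It is not taken from the talk or the book; the class name, the thresholds, and the injectable `clock` parameter are all assumptions chosen for illustration. After a run of consecutive failures the breaker stops calling the dependency for a cool-down period, then lets a single trial call through.

```python
import time


class CircuitOpenError(Exception):
    """Raised when the breaker refuses a call without trying the dependency."""


class CircuitBreaker:
    """Hypothetical minimal breaker: closed -> open -> half-open -> closed."""

    def __init__(self, failure_threshold=3, reset_after=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold  # consecutive failures before opening
        self.reset_after = reset_after              # cool-down in seconds
        self.clock = clock                          # injectable so tests need not wait
        self.failures = 0
        self.opened_at = None                       # None means the circuit is closed

    def call(self, func, *args, **kwargs):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                # Fail fast instead of piling more load on a struggling dependency.
                raise CircuitOpenError("dependency is marked unhealthy")
            # Cool-down has passed: fall through and allow one trial call (half-open).
        try:
            result = func(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.opened_at is not None or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()  # open, or re-open after a failed trial
            raise
        # Any success closes the circuit and clears the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

Note what the sketch can and cannot do: it reacts only to the failure pattern its authors anticipated (consecutive exceptions), which is the sense in which this kind of automation adds robustness rather than resilience.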

One example Casey offers of tooling that supports resilience is feature flags. They allow the engineers to reverse a decision quickly if the new feature is discovered to be causing harm in some way.
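A feature flag can be as small as a guarded branch. The sketch below is an illustration only: `FeatureFlags`, `checkout`, and the flag name `new_checkout` are hypothetical names, not anything from the talk. The point is that turning the flag off reverses the decision without a deploy.

```python
class FeatureFlags:
    """Hypothetical in-memory flag store; real systems usually back this with a service."""

    def __init__(self, defaults=None):
        self._flags = dict(defaults or {})

    def is_enabled(self, name):
        # Unknown flags are treated as off, so new code paths are opt-in.
        return self._flags.get(name, False)

    def set(self, name, enabled):
        self._flags[name] = bool(enabled)


def checkout(flags):
    """Choose a code path at call time based on the current flag value."""
    if flags.is_enabled("new_checkout"):
        return "new checkout flow"
    return "legacy checkout flow"


flags = FeatureFlags({"new_checkout": True})
first = checkout(flags)            # takes the new path
flags.set("new_checkout", False)   # an engineer reverses the decision mid-incident
second = checkout(flags)           # back on the established path
```

The tool supplies the lever; deciding when to pull it is still the human judgment that resilience depends on.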

I especially liked the Q&A related to Myth 2, starting 24 minutes into the video. I'm capturing some of that back-and-forth here and want to edit it down to something more readable.

Host: One of your myths hits me personally... I see documenting best practices and runbooks as vital.

Casey: Resilience is having the adaptive capacity to handle incidents. That capacity requires humans who have the skills and context to know how to improvise. A runbook doesn't have that, even when well documented. The person following the runbook must still know whether it is even the right runbook to follow under the circumstances. You cannot move the needle on reliability via runbooks. It is worth investing in better docs, but that doesn't move the needle.

Host: Checklist Manifesto—rigorously adhering to checklists in crisis situations.

Casey: Using a tool that you have experience with to help get a job done is great. That's different from a runbook as a remediation tactic. A pilot going through a checklist before takeoff is using it to bring their own experience forward.

Automation can help with robustness, but automation cannot help with improvisation.

Host: self-healing systems—specifically references monitoring and alerting companies.

Casey: You can raise the floor of robustness. Consider circuit breakers and similar patterns. Even alerting is a primitive form of self-healing, since it brings humans into a problem. These still lack the background and context that humans bring to improvisation. A defining quality of a complex system is that it can't fit in a single engineer's head. By definition, self-healing can only cope with the conditions that the engineers can think of ahead of time. Chaos engineering, by contrast, gives the humans experience that helps them learn, improve their improvisation, and discover things they did not already know.

There is always a boundary to the robustness. Resilience is the part where humans adapt the system when the boundaries are crossed.

We software engineers cope with complex systems everywhere, but somehow we don't like to see that complexity in our own work.

book

My favorite chapter in the book is Nora’s chapter, Creating Foresight, which opens the second section, focused on human factors.

She tells the tale of the long journey at Netflix creating ChAP and Monocle (arguably the best-in-class chaos engineering tools in the industry, that we know of) and how they ultimately turned off the automation a couple of weeks after they’d finished it.

The journey of creating that automation was a fantastic tool to build the expertise they needed and it turned out to be the expertise that was of value to the business, not the automation.

It also explains the rationale behind her current project which is not an automated chaos engineering tool, despite her years of experience building exactly that kind of tooling. She describes her current work on incident analysis as motivated by her experience in chaos engineering.

Deeply understanding incidents reveals which areas of the system are unknown or misunderstood, and guides the design of chaos experiments that will create foresight from those unknowns.

Jason Cahoon wrote a chapter about Google’s DiRT that situates chaos engineering as a natural extension of the good-old practice of disaster recovery.

Logan Rosen wrote a chapter about how LinkedIn enables chaos engineering during development with a clever combination of distributed tracing and their own home-grown tool for adding chaos on the client side of a connection. Our own chaos panda would enable us to build this same tooling for ourselves.
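As a rough illustration of the idea, and explicitly not LinkedIn's implementation, the sketch below wraps the client side of a call and consults a directive that travels with the request's trace context. Every name here is an assumption made for the example: `chaos_client`, the `trace` and `chaos` fields, and the `latency` and `error` directive kinds. Because the directive rides along with one traced request, only that request is disrupted, which is what makes this kind of experiment reasonable during development.

```python
import time


class InjectedFailure(Exception):
    """Raised in place of a real downstream error during an experiment."""


def chaos_client(send, sleep=time.sleep):
    """Wrap a client-side `send(request)` callable with opt-in disruption.

    Requests are plain dicts in this sketch. A request is disrupted only when
    its trace context carries a directive that targets the service being called.
    """

    def wrapped(request):
        directive = request.get("trace", {}).get("chaos")
        if directive and directive.get("target") == request.get("service"):
            if directive["kind"] == "latency":
                sleep(directive["seconds"])  # delay, then make the real call
            elif directive["kind"] == "error":
                raise InjectedFailure(request["service"])  # never reaches the server
        return send(request)

    return wrapped


# Usage: only the tagged request to "profiles" is slowed down.
client = chaos_client(lambda request: "ok")
tagged = {
    "service": "profiles",
    "trace": {"chaos": {"target": "profiles", "kind": "latency", "seconds": 0.01}},
}
client(tagged)                                 # waits briefly, then returns "ok"
client({"service": "profiles", "trace": {}})   # untouched
```

Injecting on the client side means the downstream service needs no changes, and the blast radius is limited to requests that explicitly carry the directive.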

There was a fun common bit of advice in these two chapters from Google and LinkedIn, echoed in another chapter from a Microsoft engineer: all three suggested focusing experiments on network latency. There are many other ways that our components can fail, but the advice from all of these folks indicated that latency experiments uncover the vast majority of unexpected systemic behaviors.

There’s a whole section on human factors that is deeply informed by the Resilience Engineering community. Nora Jones's chapter belongs to this section.

At work, the approach my team and I took in preparing for a production gameday in April was very much drawn from learning from incidents. We took time to have conversations with 13 different teams about how specific new latency in our highest-volume and most widely shared databases would affect their systems. The conversations themselves help everyone re-evaluate their mental models of how systems are interconnected and recall what unexpected behaviors we have encountered before, in hopes of predicting what might happen.

That is the learning.

We were all well calibrated to our respective systems and knew where to look for signals of potential customer impact. We were primed to verify how well we understood our own systems. Had any symptoms of impact appeared, we would have been able to verify our mitigation processes.

Learning from incidents creates a feedback loop with chaos experiments that grows into a culture of continuous learning, continually informing and guiding all of the hard prioritization decisions as a software business.

This page wants to be expanded into its own wiki, I think.

Separate these concerns

Book

Casey's Talk

Unpack the Myths — maybe each gets a page

Include the Q&A part of the talk that asked about Checklist Manifesto in response to Myth 2 Best Practices and Runbooks.

Experience report from production chaos

Nora's chapter emphasizing experiment design

Relationship to learning from incidents, including the "Incident Analysis and Chaos Engineering" blog by Ryan Franz.

Connect the dots to exploratory testing. See Explore It!

Connect the dots to exploration as a model for learning.

See also other Chaos Engineering pages in the federation.

Chaos Engineering is a philosophy of testing that tackles systemic uncertainty head-on. Chaos as a discipline is changing the way the industry builds complex distributed systems. (Source: PNSQC.)